Some properties for the Exec Source are: PropertyĪ shell invocation used to run the command. On the other hand, the date will probably not produce the desired result. These two commands produce streams of data. Thus configurations such as cat or tail -F will produce the desired results. If for any reason the process exits, then the source also exits and will not produce any further data. Unless the property logStdErr is set to true, stderr is simply discarded. It expects that process to continuously produce data on stdout. This Apache Flume source Exec on strat-up runs a given Unix command. It specifies the keytab location used by the Thrift Source in combination with the agent-principal to authenticate to the Kerberos KDC. It specifies the Kerberos principal used by the Thrift Source to authenticate to the Kerberos KDC. In secure mode, Thrift source will accept connections only from those Thrift clients who are having Kerberos enabled and successfully authenticated to the Kerberos KDC. In Kerberos mode, for successful authentication agent-principal and agent-keytab are required. It is set to true to enable Kerberos authentication. It specifies whether to enable Kerberos security or not. Some of the properties of Thrift source are: Property Name The thrift source uses two properties the agent-principal and agent-key tab to authenticate to the Kerberos KDC.

We can do so by enabling Kerberos authentication. We can configure the Thrift source to start in a secure mode. When a Thrift source gets paired with the built-in Thrift Sink on the different Flume agent, then it could create tiered collection topologies. Thrift Source receives events from the external Thrift client. The compression-type should match the compression-type of the matching AvroSource.Įxample for agent named agent1, source src, and channel ch1: agent1.sources = src Specify the maximum number of worker threads to spawn Specify the hostname or IP address to listen on. Specify the channels through which an event travels. Some of the properties for Avro source are: Property Name When an Avro source is paired with the built-in Avro Sink on another Flume agent, then it can create tired collection topologies. It listens to it through the Avro port number.

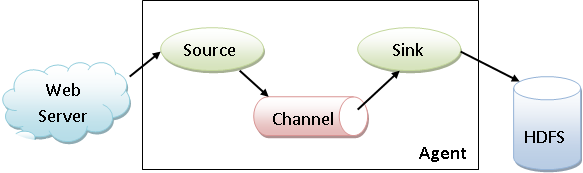

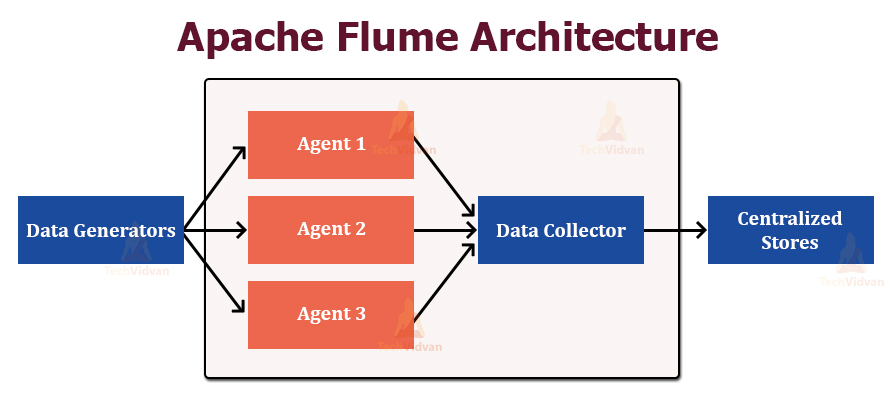

(Sender will not commit its transaction till it receives a signal from the receiver.Avro source receives events from the external Avro client stream. After receiving the signal, the sender commits its transaction. Soon after receiving the data, the receiver commits its own transaction and sends a “received” signal to the sender. In Flume, for each event, two transactions take place: one at the sender and one at the receiver. The data flow in which the data will be transferred from many sources to one channel is known as fan-in flow. Multiplexing − The data flow where the data will be sent to a selected channel which is mentioned in the header of the event. Replicating − The data flow where the data will be replicated in all the configured channels. The dataflow from one source to multiple channels is known as fan-out flow. Within Flume, there can be multiple agents and before reaching the final destination, an event may travel through more than one agent. The following diagram explains the data flow in Flume. Just like agents, there can be multiple collectors in Flume.įinally, the data from all these collectors will be aggregated and pushed to a centralized store such as HBase or HDFS. The data in these agents will be collected by an intermediate node known as Collector. These agents receive the data from the data generators. Generally events and log data are generated by the log servers and these servers have Flume agents running on them. Flume is a framework which is used to move log data into HDFS.
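As a rough sketch of the Exec source described in this article, the fragment below tails a log file into a channel. The agent name a1, source name r1, channel name c1, and the log path are placeholders chosen for illustration and do not come from the article. The memory channel is likewise only an assumption to make the fragment complete, and a sink would still have to be added before the agent does anything useful.

```properties
# Hypothetical names: agent a1, source r1, channel c1
a1.sources = r1
a1.channels = c1

a1.sources.r1.type = exec
# tail -F keeps running and streams new lines, unlike a one-shot command such as date
a1.sources.r1.command = tail -F /var/log/app.log
# Shell invocation used to run the command
a1.sources.r1.shell = /bin/bash -c
# Log stderr of the command instead of discarding it
a1.sources.r1.logStdErr = true
a1.sources.r1.channels = c1

# Assumed channel type, for illustration only
a1.channels.c1.type = memory
```

Because the source stops producing data once the command exits, a long-running command such as tail -F is the natural fit here.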

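The Avro and Thrift source settings discussed in this article could be combined in one agent roughly as follows. This is a minimal sketch: the agent, source, and channel names agent1, src, and ch1 follow the article's example, while thriftSrc, the port numbers, the thread count, the Kerberos principal, and the keytab path are all hypothetical values that would have to be replaced with real ones.

```properties
agent1.sources = src thriftSrc
agent1.channels = ch1

# Avro source: receives events from Avro clients or an upstream Avro sink
agent1.sources.src.type = avro
agent1.sources.src.channels = ch1
agent1.sources.src.bind = 0.0.0.0
# Placeholder port
agent1.sources.src.port = 4141
# Placeholder worker thread limit
agent1.sources.src.threads = 8
# Must agree with the compression-type configured on the sending side
agent1.sources.src.compression-type = none

# Thrift source started in secure (Kerberos) mode
agent1.sources.thriftSrc.type = thrift
agent1.sources.thriftSrc.channels = ch1
agent1.sources.thriftSrc.bind = 0.0.0.0
# Placeholder port
agent1.sources.thriftSrc.port = 4353
agent1.sources.thriftSrc.kerberos = true
# Placeholder principal and keytab path
agent1.sources.thriftSrc.agent-principal = flume/host.example.com@EXAMPLE.COM
agent1.sources.thriftSrc.agent-keytab = /etc/security/keytabs/flume.keytab

# Assumed channel type, for illustration only
agent1.channels.ch1.type = memory
```

With kerberos set to true, both agent-principal and agent-keytab need to be present, and only Kerberos-authenticated Thrift clients will be able to connect.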